merge sort advantages and disadvantages


Merge Sort, an explanation of it

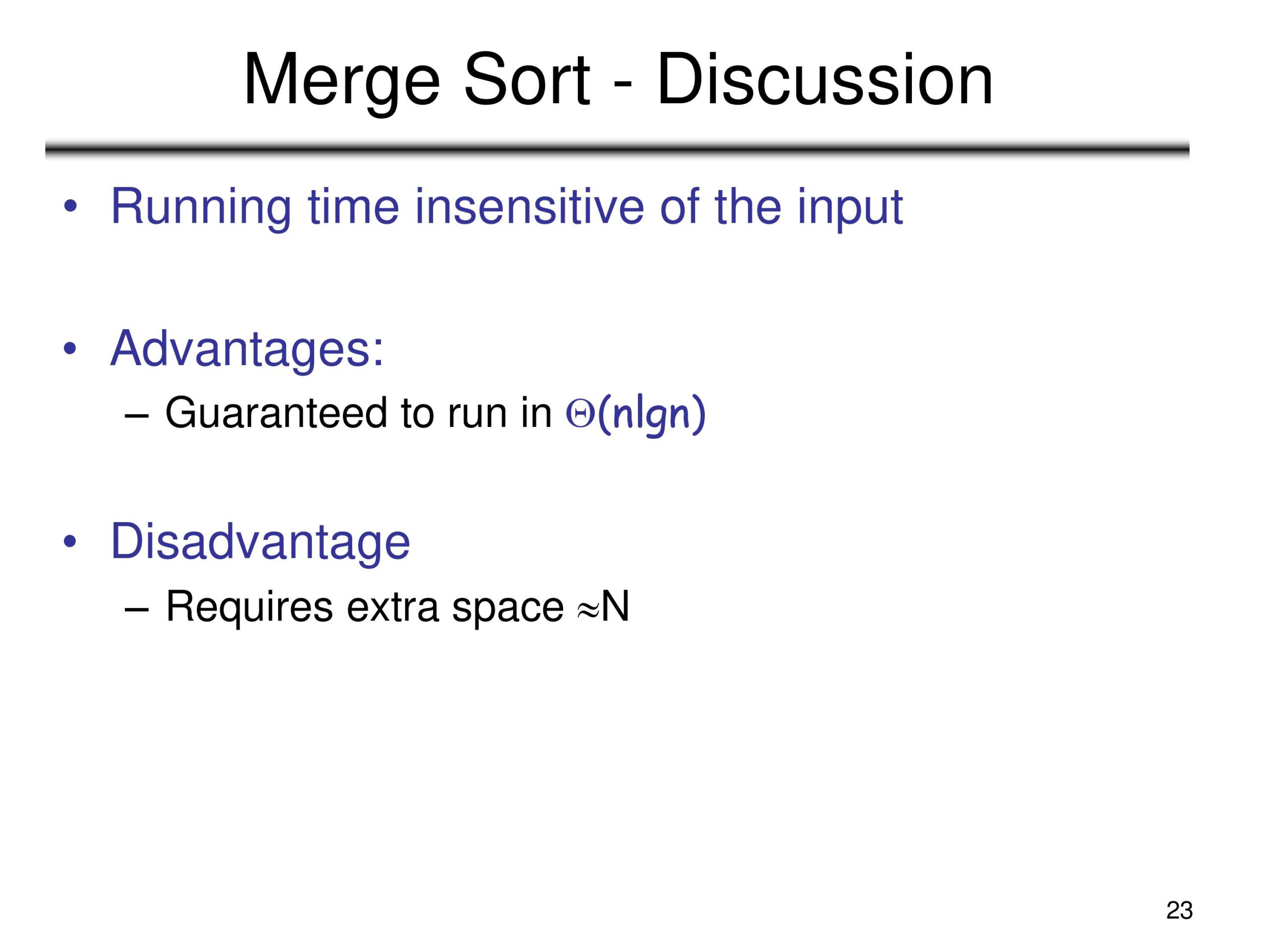

What is Merge Sort? How does it work? Actual recursion vs. what I thought was recursion. An example of Merge Sort. What are the advantages and disadvantages?

I’m currently taking the Udemy class JavaScript Algorithms and Data Structures Masterclass by Colt Steele, which I would recommend to anyone wanting to learn more about algorithms in JavaScript. One of its many subjects is sorting algorithms. The one I will be discussing today is Merge Sort.

What is Merge Sort?

Merge Sort is a sorting algorithm. According to Wikipedia, it was invented by John von Neumann way back in 1945. It is a divide-and-conquer algorithm. Divide and conquer is an algorithm design pattern in which you divide the input recursively into subproblems until each one is simple enough to solve, then combine the results. With that design pattern, Merge Sort runs in O(n log n) time, compared with O(n²) for simpler sorting algorithms such as Bubble Sort. In other words, it’s way faster than those simpler algorithms when it comes to large datasets.
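To get a feel for that gap, here is a rough sketch that compares the two growth rates. The function name `roughSteps` is made up for illustration, and the numbers ignore constant factors, so treat them as orders of magnitude rather than timings.

```javascript
// Rough step counts for sorting n items. Constant factors are ignored,
// so these are orders of magnitude, not measured running times.
function roughSteps(n) {
  return {
    nLogN: Math.round(n * Math.log2(n)), // growth rate of Merge Sort
    nSquared: n * n,                     // growth rate of Bubble Sort
  };
}

for (const n of [10, 1000, 1000000]) {
  const { nLogN, nSquared } = roughSteps(n);
  console.log(`n = ${n}: n log n is about ${nLogN}, n squared is ${nSquared}`);
}
```

For small inputs the two counts are close, but they diverge quickly as n grows.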

How does it work?

In the above diagram, you can see a visual representation of how the algorithm works. The algorithm takes an input, which can be of various data types, but we will roll with Arrays for now. The input is then recursively divided until you have a set of Arrays containing only 1 element each. Once you’re at this stage, you apply your sorting logic. In the example above, since we are dealing with integers, it is just a simple comparison of the elements of two single-element Arrays. Then you merge the sorted numbers into a merged Array of two numbers. Next, you continue to recursively sort and merge the Arrays until you have only one sorted Array.

A side note about recursion

Before starting the JavaScript Algorithms and Data Structures Masterclass, I thought I knew what recursion was. To a degree, I was correct in my perception: it’s a function that calls itself. My typical recursive function would be something like this:

Well, it turns out true recursion does take fewer lines of code but conceptually is a little harder to comprehend. The only reason I’m mentioning it here is that recursion is a key component to Merge Sort.

An example and explanation of Merge Sort

Okay, so now onto an actual example and an explanation of what’s going on. Typically, when you utilize Merge Sort, it would be with a huge dataset, but we will use an array of four random integers.

Firstly, we need a helper function.

As I previously mentioned, the basis of Merge Sort is to divide then merge sorted arrays. So we need a helper function to merge all those arrays. It will be agnostic about the length of our arrays. All it cares about is that we input two arrays into it.
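Here is a minimal sketch of what such a helper could look like. It is not necessarily the code from the course; the name `merge` and its parameter names are my own assumptions.

```javascript
// Merge two arrays that are each already sorted in ascending order
// into one new sorted array. The inputs are not modified.
function merge(left, right) {
  const result = [];
  let i = 0; // next unread position in left
  let j = 0; // next unread position in right

  // Compare the front elements and take the smaller one each time.
  // Using <= keeps equal elements in their original order (stable).
  while (i < left.length && j < right.length) {
    if (left[i] <= right[j]) {
      result.push(left[i]);
      i++;
    } else {
      result.push(right[j]);
      j++;
    }
  }

  // One array is exhausted; copy whatever is left in the other.
  while (i < left.length) result.push(left[i++]);
  while (j < right.length) result.push(right[j++]);

  return result;
}

console.log(merge([1, 10, 50], [2, 14, 99, 100]));
// [1, 2, 10, 14, 50, 99, 100]
```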

So in the above helper function, the code takes in two arrays of whatever lengths, then compares the values sequentially of array-1 and array-2. One thing to note is that for this function to work, our arrays need to be sorted. This is already done because, as mentioned before, we have taken our unsorted Array and broken it down to singular elements within each array before executing this function.

Now the secret sauce.

If you’re not aware/comfortable with true recursion, as mentioned above, this function may be a bit of a head-scratcher. I’ll try to walk you through what’s happening in hopes of making it make more sense to you.
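A minimal sketch of the recursive part might look like the following. Again, this is my own illustration under assumed names (`mergeSort`, `merge`), not necessarily the course's code, and the four integers are arbitrary.

```javascript
// Helper: merge two already-sorted arrays into one new sorted array.
function merge(left, right) {
  const result = [];
  let i = 0;
  let j = 0;
  while (i < left.length && j < right.length) {
    if (left[i] <= right[j]) result.push(left[i++]);
    else result.push(right[j++]);
  }
  while (i < left.length) result.push(left[i++]);
  while (j < right.length) result.push(right[j++]);
  return result;
}

// Recursively split the array in half until the pieces have at most
// one element, then merge the sorted halves on the way back up.
function mergeSort(arr) {
  // Base case: zero or one element is already sorted.
  if (arr.length <= 1) return arr;

  const mid = Math.floor(arr.length / 2);
  const left = mergeSort(arr.slice(0, mid)); // sort the left half
  const right = mergeSort(arr.slice(mid));   // sort the right half
  return merge(left, right);                 // combine the two sorted halves
}

console.log(mergeSort([24, 10, 76, 73])); // [10, 24, 73, 76]
```

For `[24, 10, 76, 73]`, the calls split the array into `[24, 10]` and `[76, 73]`, then into four single-element arrays. On the way back, `merge` produces `[10, 24]` and `[73, 76]`, and the final merge gives `[10, 24, 73, 76]`.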

What is Merge Sort Algorithm: How does it work, its Advantages and Disadvantages

The “Merge Sort” uses a recursive algorithm to achieve its results. The divide-and-conquer algorithm breaks down a big problem into smaller, more manageable pieces that look similar to the initial problem. It then solves these subproblems recursively and puts their solutions together to solve the original problem.

By the end of this tutorial, you will have a better understanding of the technical fundamentals of the “Merge Sort” with all the necessary details, along with practical examples.

Merge sort

In computer science, merge sort (also commonly spelled as mergesort) is an efficient, general-purpose, and comparison-based sorting algorithm. Most implementations produce a stable sort, which means that the order of equal elements is the same in the input and output. Merge sort is a divide and conquer algorithm that was invented by John von Neumann in 1945. A detailed description and analysis of the bottom-up merge sort appeared in a report by Goldstine and von Neumann as early as 1948.

Algorithm

Conceptually, a merge sort works as follows:

- Divide the unsorted list into n sublists, each containing one element (a list of one element is considered sorted).

- Repeatedly merge sublists to produce new sorted sublists until there is only one sublist remaining. This will be the sorted list.
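The two steps above can be sketched almost literally in JavaScript. This is an illustrative sketch only; the names `mergeRuns` and `mergeSortBottomUp` are assumptions, and a practical implementation would avoid creating so many small arrays.

```javascript
// Merge two sorted sublists into one new sorted sublist.
function mergeRuns(a, b) {
  const out = [];
  let i = 0;
  let j = 0;
  while (i < a.length && j < b.length) {
    if (a[i] <= b[j]) out.push(a[i++]);
    else out.push(b[j++]);
  }
  while (i < a.length) out.push(a[i++]);
  while (j < b.length) out.push(b[j++]);
  return out;
}

function mergeSortBottomUp(items) {
  // Step 1: divide the list into n sublists of one element each.
  let runs = items.map((x) => [x]);

  // Step 2: repeatedly merge neighbouring sublists until one remains.
  while (runs.length > 1) {
    const next = [];
    for (let i = 0; i < runs.length; i += 2) {
      if (i + 1 < runs.length) {
        next.push(mergeRuns(runs[i], runs[i + 1]));
      } else {
        next.push(runs[i]); // odd one out is carried to the next pass
      }
    }
    runs = next;
  }

  // An empty input produces no sublists at all.
  return runs.length === 1 ? runs[0] : [];
}

console.log(mergeSortBottomUp([38, 27, 43, 3, 9, 82, 10]));
// [3, 9, 10, 27, 38, 43, 82]
```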

Top-down implementation

This example uses C-like code with indices for a top-down merge sort algorithm that recursively splits the list (the sublists are called runs in this example) until the sublist size is 1, then merges those sublists to produce a sorted list. The copy-back step is avoided by alternating the direction of the merge with each level of recursion (except for an initial one-time copy). To help understand this, consider an array with two elements. The elements are copied to B[], then merged back to A[]. If there are four elements, when the bottom of the recursion is reached, single-element runs from A[] are merged to B[], and then at the next higher level of recursion, those two-element runs are merged to A[]. This pattern continues with each level of recursion.

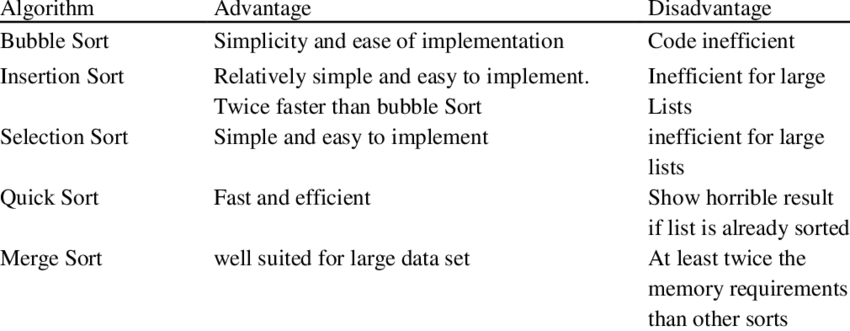

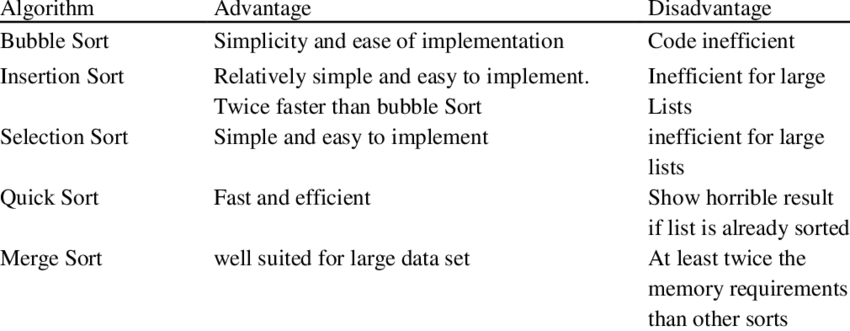
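For readers following along in JavaScript, the same idea can be sketched as below. This is a loose port of the approach described above, not a transcription of the C-like code; the function names `topDownMergeSort`, `splitMerge`, and `mergeRunsInto` are assumptions made for this example.

```javascript
// Sort array a in place, using one work array b of the same length.
function topDownMergeSort(a) {
  const b = a.slice();            // the one-time copy of A[] to B[]
  splitMerge(b, 0, a.length, a);  // sort the data from b into a
}

// Precondition: src and dst hold the same items in [begin, end).
// Afterwards, dst[begin..end) is sorted.
function splitMerge(src, begin, end, dst) {
  if (end - begin <= 1) return;   // a run of size 1 is already sorted
  const middle = Math.floor((begin + end) / 2);
  // Swap the roles of the arrays: sort both halves from dst into src...
  splitMerge(dst, begin, middle, src);
  splitMerge(dst, middle, end, src);
  // ...then merge the two sorted runs from src back into dst.
  mergeRunsInto(src, begin, middle, end, dst);
}

// Merge sorted runs src[begin..middle) and src[middle..end) into dst.
function mergeRunsInto(src, begin, middle, end, dst) {
  let i = begin;
  let j = middle;
  for (let k = begin; k < end; k++) {
    if (i < middle && (j >= end || src[i] <= src[j])) {
      dst[k] = src[i++];
    } else {
      dst[k] = src[j++];
    }
  }
}

const data = [5, 2, 4, 7, 1, 3, 2, 6];
topDownMergeSort(data);
console.log(data); // [1, 2, 2, 3, 4, 5, 6, 7]
```

Because the source and destination arrays trade places at every level, no level has to copy its merged result back before returning.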

The Advantages & Disadvantages of Sorting Algorithms

Sorting a set of items in a list is a task that occurs often in computer programming. Often, a human can perform this task intuitively. However, a computer program has to follow a sequence of exact instructions to accomplish this. This sequence of instructions is called an algorithm. A sorting algorithm is a method that can be used to place a list of unordered items into an ordered sequence. The sequence of order is determined by a key. Various sorting algorithms exist, and they differ in terms of their efficiency and performance. Some important and well-known sorting algorithms are the bubble sort, the selection sort, the insertion sort, and the quick sort.

Bubble Sort

The bubble sort algorithm works by repeatedly swapping adjacent elements that are not in order until the whole list of items is in sequence. In this way, items can be seen as bubbling up the list according to their key values.
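As a minimal sketch of that description, assuming an array of numbers sorted in ascending order (the early exit when a pass makes no swaps is a common refinement, not something the description requires):

```javascript
// Sort an array of numbers in place by repeatedly swapping
// adjacent elements that are out of order.
function bubbleSort(arr) {
  for (let end = arr.length - 1; end > 0; end--) {
    let swapped = false;
    for (let i = 0; i < end; i++) {
      if (arr[i] > arr[i + 1]) {
        const tmp = arr[i];
        arr[i] = arr[i + 1];
        arr[i + 1] = tmp;
        swapped = true;
      }
    }
    // If a full pass made no swaps, the list is already in order.
    if (!swapped) break;
  }
  return arr;
}

console.log(bubbleSort([5, 1, 4, 2, 8])); // [1, 2, 4, 5, 8]
```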

The primary advantage of the bubble sort is that it is popular and easy to implement. Furthermore, in the bubble sort, elements are swapped in place without using additional temporary storage, so the space requirement is at a minimum. The main disadvantage of the bubble sort is that it does not deal well with a list containing a huge number of items. This is because the bubble sort requires on the order of n² processing steps to sort n elements. As such, the bubble sort is mostly suitable for academic teaching but not for real-life applications.

Selection Sort

The selection sort works by repeatedly going through the list of items, each time selecting an item according to its ordering and placing it in the correct position in the sequence.
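A minimal sketch of this idea, assuming an array of numbers and ascending order, where "selecting an item" means finding the smallest remaining one:

```javascript
// Sort an array of numbers in place: on each pass, select the smallest
// remaining item and swap it into the next position of the sorted part.
function selectionSort(arr) {
  for (let start = 0; start < arr.length - 1; start++) {
    let minIndex = start;
    for (let i = start + 1; i < arr.length; i++) {
      if (arr[i] < arr[minIndex]) minIndex = i;
    }
    if (minIndex !== start) {
      const tmp = arr[start];
      arr[start] = arr[minIndex];
      arr[minIndex] = tmp;
    }
  }
  return arr;
}

console.log(selectionSort([64, 25, 12, 22, 11])); // [11, 12, 22, 25, 64]
```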

The main advantage of the selection sort is that it performs well on a small list. Furthermore, because it is an in-place sorting algorithm, no additional temporary storage is required beyond what is needed to hold the original list. The primary disadvantage of the selection sort is its poor efficiency when dealing with a huge list of items. Similar to the bubble sort, the selection sort requires on the order of n² steps to sort n elements. Additionally, it makes the same number of comparisons whatever the initial ordering of the items, so it gains nothing when the list is already partly sorted. Because of this, the selection sort is suitable only for a list of a few elements.

Insertion Sort

The insertion sort repeatedly scans the list of items, each time taking the next item from the unordered part of the list and inserting it into its correct position in the ordered part.
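A minimal sketch of the insertion sort, assuming an array of numbers and ascending order:

```javascript
// Sort an array of numbers in place. Everything to the left of index i
// is already ordered; each pass inserts arr[i] into that ordered part.
function insertionSort(arr) {
  for (let i = 1; i < arr.length; i++) {
    const current = arr[i];
    let j = i - 1;
    // Shift larger items one place to the right to open a gap.
    while (j >= 0 && arr[j] > current) {
      arr[j + 1] = arr[j];
      j--;
    }
    arr[j + 1] = current; // drop the item into the gap
  }
  return arr;
}

console.log(insertionSort([12, 11, 13, 5, 6])); // [5, 6, 11, 12, 13]
```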

The main advantage of the insertion sort is its simplicity. It also exhibits good performance when dealing with a small list. The insertion sort is an in-place sorting algorithm, so the space requirement is minimal. The disadvantage of the insertion sort is that it needs on the order of n² steps in the worst case to sort n elements, so it does not deal well with a huge list, where O(n log n) algorithms such as the merge sort are much faster. Therefore, the insertion sort is particularly useful only when sorting a list of a few items.

Quick Sort

The quicksort works on the divide-and-conquer principle. First, it partitions the list of items into two sublists based on a pivot element. All elements in the first sublist are arranged to be smaller than the pivot, while all elements in the second sublist are arranged to be larger than the pivot. The same partitioning and arranging process is performed repeatedly on the resulting sublists until the whole list of items is sorted.
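The description above leaves the choice of pivot open. The sketch below assumes the last element of each sublist is the pivot (a Lomuto-style partition), which is only one of several common choices; the names `quickSort` and `partition` are illustrative.

```javascript
// Sort an array of numbers in place using quicksort.
function quickSort(arr, lo = 0, hi = arr.length - 1) {
  if (lo >= hi) return arr; // zero or one item: nothing to do
  const p = partition(arr, lo, hi);
  quickSort(arr, lo, p - 1); // sublist of items smaller than the pivot
  quickSort(arr, p + 1, hi); // sublist of items not smaller than the pivot
  return arr;
}

// Rearrange arr[lo..hi] around the pivot arr[hi] and return the
// pivot's final index.
function partition(arr, lo, hi) {
  const pivot = arr[hi];
  let boundary = lo; // items left of boundary are smaller than the pivot
  for (let i = lo; i < hi; i++) {
    if (arr[i] < pivot) {
      const tmp = arr[i];
      arr[i] = arr[boundary];
      arr[boundary] = tmp;
      boundary++;
    }
  }
  // Place the pivot between the two sublists.
  const tmp = arr[boundary];
  arr[boundary] = arr[hi];
  arr[hi] = tmp;
  return boundary;
}

console.log(quickSort([10, 7, 8, 9, 1, 5])); // [1, 5, 7, 8, 9, 10]
```

With this pivot choice, an input that is already sorted produces the most uneven partitions, which is one way to reach the quadratic worst case.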

The quicksort is often regarded as one of the fastest general-purpose sorting algorithms in practice. This is because its average running time is on the order of n log n steps, so it is able to deal well with a huge list of items. Because it partitions in place, it needs no second array, only a small amount of stack space for the recursion. The disadvantage of the quicksort is that its worst-case performance, on the order of n² steps, is similar to the average performance of the bubble, insertion, or selection sorts. This happens when the chosen pivots repeatedly split the list very unevenly. In general, the quicksort is an effective and widely used method of sorting a list of any size.